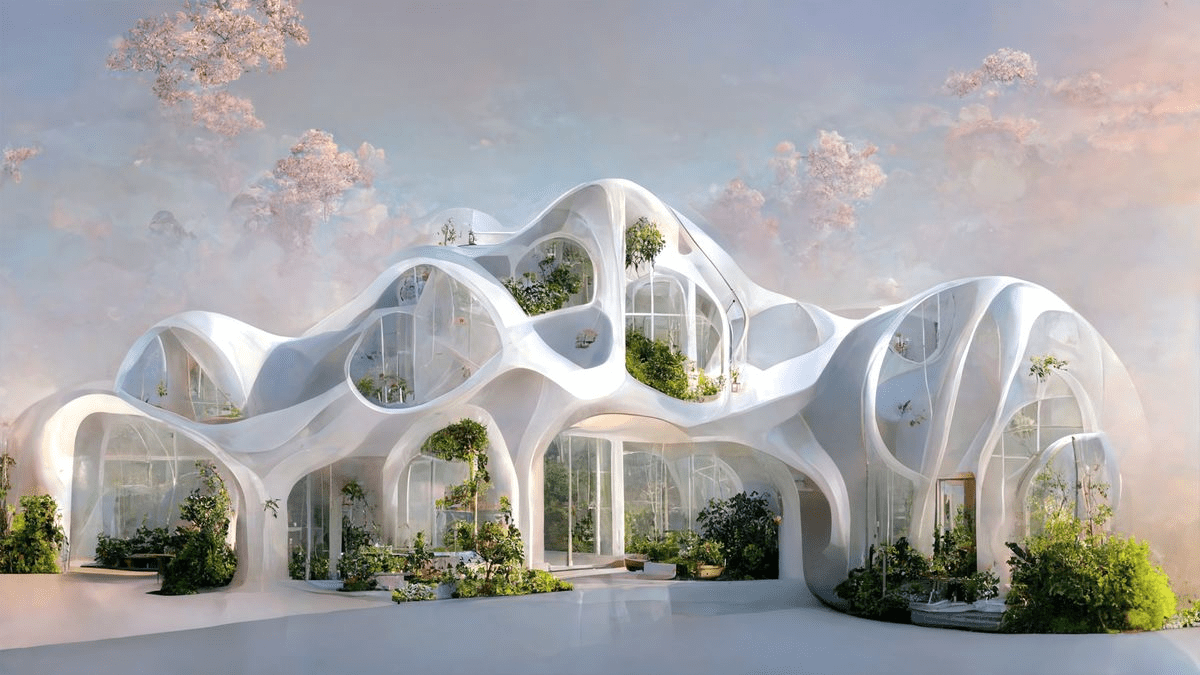

The arrival of generative AI has deeply shaken the creative industry and opened up previously unimaginable possibilities. Some of the latest tools in this class can produce pictures that match high-resolution photographs in realism, or generate images straight out of the realm of fantasy. All the user needs to do to get a striking visualization of practically anything is to type a detailed and accurate text description.

Of course, some text-to-image algorithms are better than others, and an elite tier of AI art-generation tools quickly emerged. The three most impressive products in this class are Midjourney, Dall-e, and Stable Diffusion, each of which has drawn effusive praise from users and experts alike. While they are comparably powerful, these AI applications are aimed at slightly different uses, and there are numerous differences between them.

What is Midjourney?

This AI model for visual creation appeared seemingly out of nowhere in 2022 and quickly became one of the most fascinating toys on the internet. The model is based on an advanced deep learning architecture and is extremely efficient at translating natural language commands into sophisticated images. This is possible due to extensive training on a huge number of examples, which essentially teach the algorithm how to infer visual interpretations of text prompts.

Midjourney is currently available only through a Discord bot on the official server of the company and is still considered to be in open beta. There are plans to make it available through a web interface, which would expand its customer base and simplify access for the average user. The AI model is also being gradually refined, which allows it to reach an even higher level of performance.

Unique features:

- The software creates 4 versions of an image based on the input prompt

- The best of the initial versions can be upgraded to a higher resolution

- Midjourney is known for creating very sharp and detailed images that look highly realistic

- It produces great-looking results even with vaguely defined prompts

- Content moderation policies prevent the generation of violent or explicit imagery

Observed problems:

- It takes a relatively long time (nearly a minute) for the algorithm to generate images

- Midjourney sometimes ignores technical instructions to create a ‘prettier’ image

Pricing: Monthly subscription with three tiers, costing $10, $30, and $60 per month

What is Stable Diffusion?

Many insiders consider Stable Diffusion the most advanced deep learning model designed for image generation, and there are plenty of practical examples to support this position. This text-to-image model has demonstrated amazing flexibility and fidelity, producing incredibly sharp and vivid pictures in a variety of styles. In addition to image generation, Stable Diffusion can perform a range of other tasks based on visual analysis, including in-painting and image-to-image translation.

Unlike most of its rivals, this software is openly available under a permissive license (CreativeML OpenRAIL-M) and can be customized and deployed on local hardware. Thanks to this attitude of the model owners, Stable Diffusion is being constantly fine-tuned by end users, which positively affects its performance in specific scenarios. On the other hand, this makes it more difficult to objectively evaluate the model, as its effectiveness may depend on the parameters of the particular deployment. So what are its key features?

Unique features:

- Neural model based on latent diffusion that can generate images from text or visual prompts

- Produces original and highly detailed work that meets the technical requirements of the user

- The software can redraw existing images with contextual changes requested by text input

- It’s possible to directly improve colors, textures, and other visual elements

- Supports many different implementations with unique interfaces and specializations

Observed problems:

- The algorithm occasionally generates images that are identical to those from its training set

- There are no strict controls preventing violent or sexual images from being generated

Pricing: Basic plan costs $9 per month, Standard plan is offered for $49 monthly, Premium plan costs $149 per month

What is Dall-e?

Dall-e is another top contender for the title of the most advanced AI image generator on the market, and since its 2022 launch, it has gained a huge number of happy users. It is developed by OpenAI, the same tech company that is behind the viral ChatGPT conversational bot, and relies on a similar deep learning architecture based on the Transformer model. This gives the algorithm a stunningly accurate ability to interpret the meaning behind the language in the prompt and produce creative responses that please the eye and meet high technical standards.

A notable trait of this model is its ability to rearrange parts of the image based on limited instructions, which makes it extremely useful for image modification or reconstruction. The model is publicly available in the form of an API, and there are already several implementations, including the Image Creator tool in Bing. This makes Dall-e one of the most easily accessible AI tools for image generation.

Unique features:

- Transformer-based generative AI algorithm for translating text prompts into visual images

- The algorithm can produce photorealistic images in a wide variety of styles

- AI model scores very high on visual reasoning tests designed for humans

- Capable of showing the same scene from different vantage points

- It can expand an existing image beyond its original borders in a consistent way

Observed problems:

- The algorithm often fails to establish proper relations between multiple objects in the image

- Dall-e is poorly suited for handling scientific images that depend on exactness

Pricing: $0.02 per image with 1024 x 1024 resolution

What Are the Differences Between Midjourney, Stable Diffusion, and Dall-e?

Looking just at the images produced by the three aforementioned AI models, one could think they are completely interchangeable, but that’s far from the truth. They were each developed independently, and while they share some general deep learning principles, they utilize vastly different architectures. In other words, each of these algorithms is trained differently and relies on different methodologies to analyze the prompts and generate images.

This structural divergence explains why each of the three models performs differently with the same prompt and may experience difficulties with different aspects of the image generation task. Another key difference is how the algorithms are deployed, with Stable Diffusion being available for local installation and the other two products being completely cloud-based. Finally, their pricing models are not the same, which becomes very important if you are considering professional use.
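To make the pricing difference concrete, here is a minimal Python sketch that compares Dall-e's per-image price with Midjourney's monthly tiers, using only the figures quoted in this article. The tier labels and function names are hypothetical. The sketch also assumes, purely for illustration, that a subscription covers as many images as you need, which real plans may not.

```python
# Prices in US cents, copied from the pricing lines in this article.
# Integer cents avoid floating-point rounding surprises.
DALLE_CENTS_PER_IMAGE = 2  # $0.02 per 1024 x 1024 image

# Tier labels are hypothetical; only the monthly prices come from the article.
MIDJOURNEY_TIERS_CENTS = {"tier 1": 1000, "tier 2": 3000, "tier 3": 6000}


def pay_per_image_cost(images: int, cents_per_image: int = DALLE_CENTS_PER_IMAGE) -> int:
    """Total cost in cents when every generated image is billed separately."""
    return images * cents_per_image


def break_even_images(subscription_cents: int,
                      cents_per_image: int = DALLE_CENTS_PER_IMAGE) -> int:
    """Smallest monthly image count at which per-image billing costs
    at least as much as the flat subscription (ceiling division)."""
    return -(-subscription_cents // cents_per_image)


if __name__ == "__main__":
    for tier, price in MIDJOURNEY_TIERS_CENTS.items():
        images = break_even_images(price)
        print(f"{tier}: ${price / 100:.2f} per month, break-even at {images} images")
```

Under these simplifying assumptions, per-image billing stays cheaper than the $10 subscription until you generate about 500 images per month.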

Which AI Image Generation Software Is the Best?

The only way to truly compare the output of Midjourney vs. Stable Diffusion vs. Dall-e is through extensive testing, and so far most evaluations find that each of the three has its own set of advantages. Midjourney might produce the best-looking images even without sophisticated prompts, but Stable Diffusion will act more consistently and make fewer errors. On the other hand, Dall-e is likely to make the most accurate semantic interpretation and interpolation judgments.

Another factor to take into account is that all three models are being continuously refined and the most glaring downsides are promptly addressed. Thus, selecting the best AI image generation software at this early point is premature and may fail to account for model updates and fine-tuning for specific tasks.

Frequently Asked Questions about AI Art Tools

1. Do image generation algorithms truly understand verbal instructions?

Despite appearing to comprehend natural language, AI models are merely statistical constructs, and they lack the general intelligence required for understanding complex visual ideas. They can follow instructions with incredible accuracy, but that doesn’t imply true comprehension.

2. Can the images created with generative AI tools include real persons and places?

Since AI models are built using large publicly available datasets, they can reproduce people’s likenesses and real-world locations. They are also able to amalgamate multiple individuals or places into original visualizations that don’t represent anything from the real world.

3. What are the copyright implications of using visual AI tools like Midjourney?

This is currently a gray area, as multiple aspects of AI algorithm training and image generation rely on using third-party content without explicit permission. Another issue is whether it’s OK to submit AI-made images to visual contests and claim authorship over them. Such issues will likely be clarified in the near future and strict boundaries for professional use of AI tools will be precisely defined.

Final Thoughts

Over the past 12 months, we have witnessed an unprecedented leap in the capabilities of generative AI tools, including visually oriented models. In particular, Dall-e, Midjourney, and Stable Diffusion have outperformed expectations by a huge margin and now approach the quality of human artists. While all three models remain works in progress and still make plenty of silly mistakes, their ability to create stunning images almost instantly from simple textual descriptions is a game changer that may disrupt creative industries and the entire media ecosystem.